Rather than be redundant, I'll simply list the readme steps here to take us through implementing the model with an ML Component in Lens Studio.

Lens Studio Project Upload and Configuration

1. Upon opening the Lens Studio project file, you will be shown a "Model Import" dialog box. You do not need to change any of these settings.
2. Select the "ML Component" object from the Objects list in the top-left corner of Lens Studio. Then, in the Inspector on the right, change the value of Model from "None" to the model included in your project.
3. Again with the "ML Component" object selected, in the Inspector under the model's Inputs, change the value of "Texture" from "None" to "Default Textures/Device Camera Texture". This feeds input from the camera to your model.
4. Create an output texture for your model by clicking the "Create Output Texture" button on the ML Component.
5. (Optional) Change the input resolution to experiment with the look of different texture sizes. Note: the output size will be slightly larger than the input size. This is to accommodate cropping that eliminates edge artifacts produced by the model.
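Step 5 notes that the model's output is slightly larger than its input so that the border containing edge artifacts can be cropped away. The readme doesn't say how that crop is performed. The following is only a minimal Python sketch of a center crop. It assumes the extra pixels form a roughly even border around the image and that an image is a plain list of pixel rows. `center_crop` and `Image` are hypothetical names, not part of Lens Studio or SnapML.

```python
from typing import List

# Hypothetical representation: a single-channel image as a list of pixel rows.
Image = List[List[float]]


def center_crop(output: Image, target_h: int, target_w: int) -> Image:
    """Hypothetical helper (not a Lens Studio/SnapML API).

    Trims the border of a model output that is larger than the input,
    returning a target_h x target_w image taken from the center. Assumes
    the extra pixels surround the image evenly; if the size difference is
    odd, the leftover row/column is dropped from the bottom/right edge.
    """
    out_h = len(output)
    out_w = len(output[0]) if out_h else 0
    if target_h > out_h or target_w > out_w:
        raise ValueError("target size must not exceed the output size")

    # Offsets of the top-left corner of the region we keep.
    top = (out_h - target_h) // 2
    left = (out_w - target_w) // 2
    return [row[left:left + target_w] for row in output[top:top + target_h]]


# Usage with hypothetical sizes: a 4x4 input and a 6x6 model output,
# i.e. a 1-pixel border on every side that may contain edge artifacts.
output = [[float(r * 6 + c) for c in range(6)] for r in range(6)]
cropped = center_crop(output, 4, 4)
print(len(cropped), len(cropped[0]))  # 4 4
print(cropped[0][0])  # 7.0 (row 1, column 1 of the original output)
```

Splitting the margin evenly keeps the cropped result aligned with the camera input it came from, which matters when the output is drawn back over the live camera feed.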

# SnapML Lens Studio code

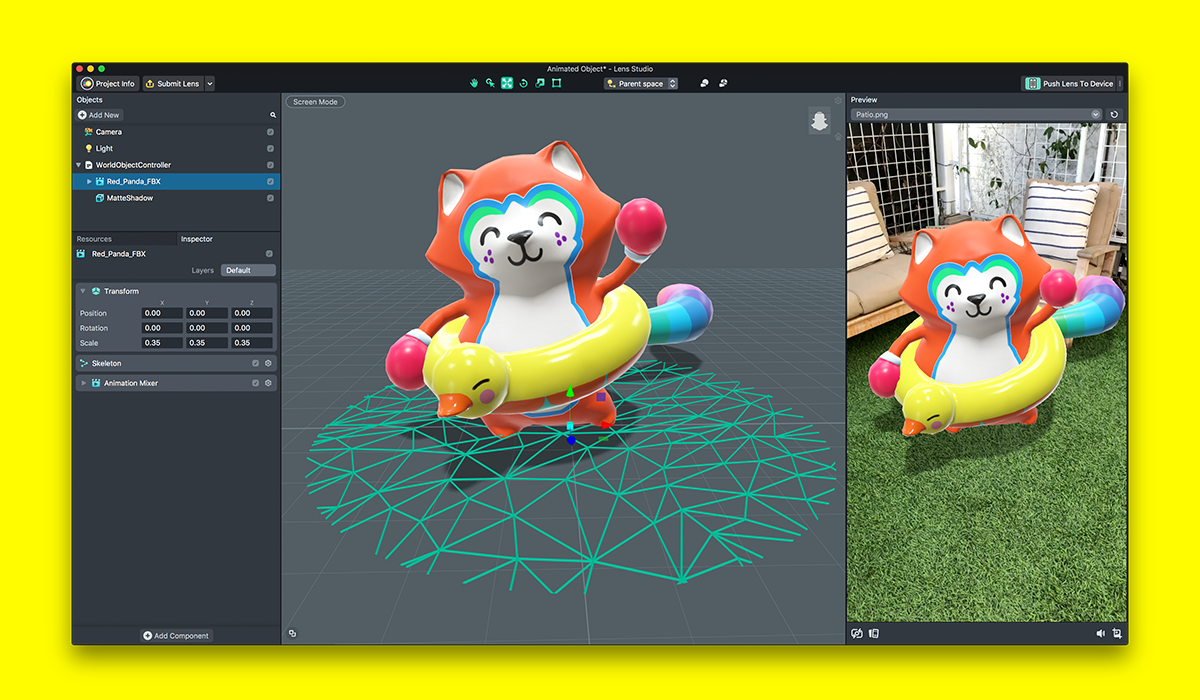

Our development platform’s new support for SnapML allows AR creators to build completely custom ML models without code and export them directly as Lens Studio template projects.

In 2015, Snapchat, the incredibly popular social content platform, added Lenses to its mobile app: augmented reality (AR) filters that give you big strange teeth, turn your face into an alpaca, or trigger digital brand-based experiences. In addition to AR, the other core underlying technology in Lenses is mobile machine learning: neural networks running on-device that do things like create a precise map of your face or separate an image's or video's background from its foreground. With the release of Lens Studio 3.x (the current version is 3.2 at the time of writing), the Lens Studio team introduced SnapML, a framework that connects custom machine learning models to Lens Studio. There are currently a number of really impressive pre-built templates available in Lens Studio's official template library. But to truly unlock the expansive potential for things like custom object tracking, improved scene understanding, and deeper immersion between the physical and digital worlds, creators need to be able to build custom models.

And that's where an undeniable limiting factor remains: ML is really hard. The expertise and skill sets required for ML project lifecycles are quite specialized and are often mismatched with those of creators, designers, and others developing Snapchat Lenses. This gap represents a significant barrier to entry for many creators and creative agencies, who generally don't have the resources (time, skills/expertise, or tools) to invest in model-building pipelines. We're trying to bridge this gap at Fritz AI.